Most UK businesses do not lose money on AI because they chose the wrong model. They lose money because they started building too soon.

When teams skip audit work, the same pattern shows up: unclear scope, duplicated effort, weak data inputs, and output quality problems that only become visible after launch. By then, you are already paying for rework, stakeholder friction, and timeline drift.

A practical AI audit avoids that. It gives you a clear view of where AI can actually create measurable value, where the risks sit, and what needs fixing before development begins.

This guide breaks down exactly what an effective AI audit should include before any build starts, with a commercial lens for SME and mid-sized UK organisations.

What is an AI audit in plain terms?

Definition: An AI audit is a structured assessment of your commercial goals, workflows, data, systems, governance, and delivery capability to determine what should be built, in what order, and under which controls.

It is not a generic workshop, and it is not a technical deep dive for its own sake. It is a decision framework that answers five practical questions:

- What should we do first?

- What should we avoid?

- What will this cost in time, money, and internal effort?

- How will we measure success?

- What controls are required before release?

If your team currently has ideas but no clear order of execution, this is where an audit creates immediate clarity. If needed, this can be scoped through a focused AI audit service rather than a long strategy phase.

Why auditing before build is commercially smarter

Starting with build sounds fast, but in most cases it is slower to value. An audit reduces avoidable waste by catching issues before engineering starts.

What it prevents:

- building automations around broken processes,

- launching pilots without accountable ownership,

- using data that cannot support stable output quality,

- failing compliance checks late in the project,

- and reporting vanity metrics instead of business outcomes.

For most businesses, the cheapest point at which to correct direction is before implementation. Once build starts, every adjustment costs more.

The 10 components every pre-build AI audit should include

1) Commercial objective mapping

Every audit should start with outcomes, not tools. You need explicit commercial targets and baseline metrics before use-case selection.

Minimum audit outputs:

- Top 3 business outcomes for the next 90 days.

- Baseline metrics for each outcome.

- Expected value range (best case / likely / conservative).

Examples include reducing proposal turnaround time, improving qualified lead rate, shortening service-delivery cycles, or reducing repetitive admin hours.
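
As a rough illustration of an expected value range, the sketch below turns an hours-saved estimate into three annual figures. Every number in it (hours saved, hourly cost, working weeks) is a hypothetical assumption for the example, not a benchmark; replace them with your own baselines.

```python
def annual_value(hours_saved_per_week, hourly_cost_gbp, working_weeks=46):
    """Estimated yearly value of time saved (all inputs are assumptions)."""
    return hours_saved_per_week * hourly_cost_gbp * working_weeks

# Hypothetical scenarios for one use case, e.g. reducing repetitive admin hours.
HOURLY_COST_GBP = 30
scenarios = {"conservative": 4, "likely": 8, "best case": 12}

value_range = {
    label: annual_value(hours, HOURLY_COST_GBP)
    for label, hours in scenarios.items()
}

for label, value in value_range.items():
    print(f"{label}: GBP {value:,} per year")
```

Stating the range this way makes the assumptions visible, so leadership can challenge the inputs rather than the headline number.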

2) Workflow and process diagnostics

AI should improve workflows, not paper over process ambiguity. The audit should map current-state delivery and identify friction points by stage.

Look for:

- handoffs that create delays,

- manual repetition with no quality gain,

- approval bottlenecks,

- and tasks where human review must remain mandatory.

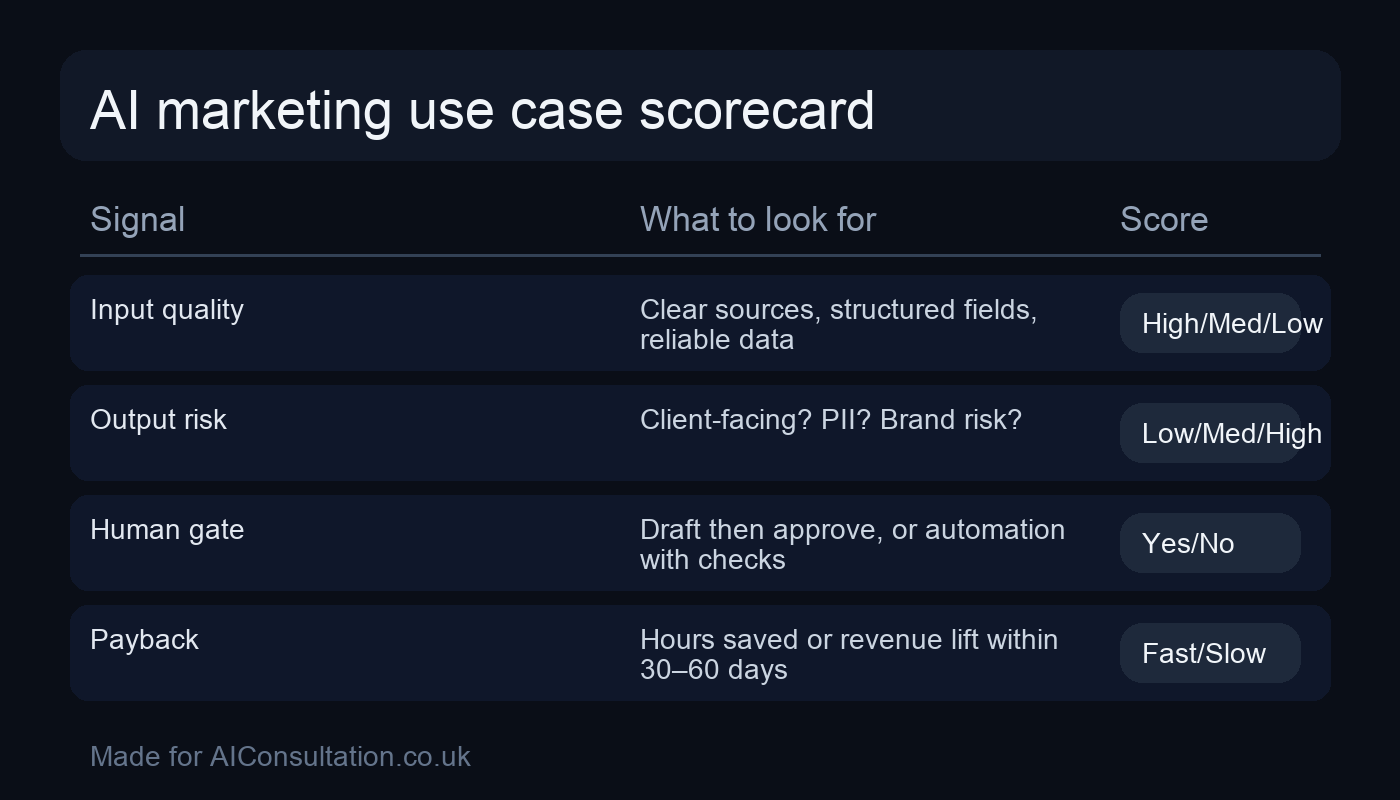

3) Use-case prioritisation model

Not all AI opportunities deserve equal attention. The audit should score use cases by impact, feasibility, risk, and time-to-value.

A simple scoring framework often includes:

- Impact: revenue, margin, retention, or productivity effect,

- Feasibility: data availability, process clarity, team capability,

- Risk: compliance exposure, brand risk, operational dependency,

- Time-to-value: how quickly measurable benefit appears.
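
One way to make a framework like this concrete is a weighted score per use case. The sketch below is a minimal example, assuming a 1 to 5 scale for each criterion; the weights, the `UseCase` structure, and the sample backlog are all hypothetical and should be agreed with stakeholders during the audit.

```python
from dataclasses import dataclass

# Hypothetical weights (sum to 1.0); illustrative only, not values from this guide.
WEIGHTS = {"impact": 0.4, "feasibility": 0.3, "risk": 0.2, "time_to_value": 0.1}

@dataclass
class UseCase:
    name: str
    impact: int         # 1 (low) to 5 (high)
    feasibility: int    # 1 (hard) to 5 (easy)
    risk: int           # 1 (low risk) to 5 (high risk)
    time_to_value: int  # 1 (slow) to 5 (fast)

def score(uc: UseCase) -> float:
    """Weighted score out of 5; risk is inverted so lower risk scores higher."""
    total = (
        WEIGHTS["impact"] * uc.impact
        + WEIGHTS["feasibility"] * uc.feasibility
        + WEIGHTS["risk"] * (6 - uc.risk)
        + WEIGHTS["time_to_value"] * uc.time_to_value
    )
    return round(total, 2)

def rank(use_cases):
    """Return use cases ordered from highest to lowest score."""
    return sorted(use_cases, key=score, reverse=True)

# Hypothetical backlog entries for illustration.
backlog = [
    UseCase("Lead qualification", impact=5, feasibility=2, risk=3, time_to_value=2),
    UseCase("Proposal drafting", impact=4, feasibility=4, risk=2, time_to_value=4),
]

for uc in rank(backlog):
    print(f"{uc.name}: {score(uc)}")
```

The value of a model like this is less the number itself and more the recorded rationale: each score can be questioned and revised as the audit uncovers new evidence.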

Where teams need broader implementation support beyond prioritisation, this usually links into practical AI services across strategy, delivery, and optimisation.

4) Data readiness assessment

Most AI performance issues are data and process issues in disguise. The audit should evaluate whether the data is usable for the proposed tasks.

Audit checks:

- data source inventory and ownership,

- field consistency and completeness,

- duplication and freshness risks,

- access model and permission constraints.

Perfection is not required, but unknown quality is expensive. The output should clearly state which use cases are viable now and which require data remediation first.
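
Some of the checks above can be approximated with a few simple measurements. The following is a rough sketch, assuming records are available as a list of dictionaries; the field names, the sample records, and the 365-day freshness threshold are hypothetical, and a real assessment would also cover ownership and access, which code alone cannot verify.

```python
from datetime import date

def readiness_report(records, required_fields, key_field, updated_field,
                     today, max_age_days=365):
    """Return completeness, duplicate, and stale rates as fractions of all records."""
    total = len(records)
    if total == 0:
        return {"completeness": 0.0, "duplicate_rate": 0.0, "stale_rate": 0.0}

    # A record is complete when every required field holds a non-empty value.
    complete = sum(
        all(r.get(f) not in (None, "") for f in required_fields) for r in records
    )
    # Duplicates are records whose key value has already been seen.
    duplicates = total - len({r.get(key_field) for r in records})
    # Stale records were last updated more than max_age_days ago.
    stale = sum((today - r[updated_field]).days > max_age_days for r in records)

    return {
        "completeness": complete / total,
        "duplicate_rate": duplicates / total,
        "stale_rate": stale / total,
    }

# Hypothetical CRM extract for illustration.
records = [
    {"email": "a@example.com", "company": "Acme", "updated": date(2024, 5, 1)},
    {"email": "a@example.com", "company": "Acme Ltd", "updated": date(2022, 1, 10)},
    {"email": "b@example.com", "company": "", "updated": date(2024, 3, 15)},
    {"email": "c@example.com", "company": "Beta", "updated": date(2024, 4, 2)},
]

report = readiness_report(
    records,
    required_fields=["email", "company"],
    key_field="email",
    updated_field="updated",
    today=date(2024, 6, 1),
)
print(report)
```

Numbers like these turn "unknown quality" into a stated position, which is what lets the audit separate use cases that are viable now from those that need remediation first.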

5) Technical architecture and integration review

The audit should define how outputs flow into existing systems, not just what a model can produce in isolation.

Questions to answer:

- How will AI outputs enter CRM, project tools, and reporting?

- What APIs, connectors, or middleware are required?

- What reliability and fallback behaviour is needed?

- Which integrations are phase one versus phase two?

If integration design is not explicit during audit, implementation timelines usually slip.

6) Governance, compliance, and risk controls

An audit is where risk boundaries become operational policy. Teams need clear rules they can actually follow under day-to-day pressure.

Minimum governance outputs:

- data handling policy (what is allowed, prohibited, restricted),

- human review checkpoints by workflow type,

- accuracy and traceability standards,

- incident escalation path.

For UK organisations handling personal data, align controls with regulatory expectations such as the ICO's guidance on AI and data protection.

If your sector has elevated risk requirements, incorporate an explicit AI compliance workstream before production rollout.

7) Capability and operating model assessment

Tooling decisions are only half the picture. The audit should assess whether the team can run and maintain AI-enabled workflows once they are live.

Assess:

- role clarity and ownership,

- training needs by function,

- who reviews and signs off outputs,

- where support and troubleshooting live.

In many SMEs, the practical model is central standards plus distributed execution by department leads.

8) Economic model and delivery plan

An audit should produce a realistic cost and timeline model, not generic assumptions.

Include:

- phase-by-phase effort (internal and external),

- licensing and infrastructure assumptions,

- delivery dependencies and risks,

- 90-day execution roadmap with milestones.

A credible plan includes what will not be delivered in phase one. Scope discipline is a performance lever.

9) KPI and measurement framework

Pre-build audit work should define exactly how success will be measured before launch.

Good measurement includes:

- primary business KPI (e.g., conversion quality, cycle-time reduction),

- supporting quality KPI (error rate, rework rate),

- risk KPI (policy breaches, approval exceptions),

- adoption KPI (active usage by target teams).

Without this, teams optimise for activity rather than impact.

10) Go / no-go decision gates

The audit should end with explicit delivery decisions:

- Go now: ready to implement immediately,

- Go with conditions: proceed after specific fixes,

- No-go: defer until constraints are resolved.

This prevents politically driven launches and keeps execution tied to readiness.
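
The three outcomes can be expressed as a simple rule over readiness checks. The sketch below assumes one possible rule: any failed check marked as blocking means no-go, any other failed check means go with conditions. The check names and the choice of which checks are blocking are hypothetical and would come from your own audit.

```python
from enum import Enum

class Decision(Enum):
    GO = "Go now"
    GO_WITH_CONDITIONS = "Go with conditions"
    NO_GO = "No-go"

def decide(checks, blocking):
    """Map readiness checks to a decision plus the list of failed checks.

    checks:   dict of check name -> True (passed) or False (failed)
    blocking: set of check names that force a no-go when they fail (assumption)
    """
    failed = [name for name, passed in checks.items() if not passed]
    if not failed:
        return Decision.GO, []
    if any(name in blocking for name in failed):
        return Decision.NO_GO, failed
    return Decision.GO_WITH_CONDITIONS, failed

# Hypothetical readiness checks for one use case.
checks = {
    "baseline metrics captured": True,
    "data owner named": True,
    "human review checkpoint defined": False,
    "personal data policy signed off": True,
}
blocking = {"personal data policy signed off"}

decision, fixes = decide(checks, blocking)
print(decision.value, fixes)
```

Writing the rule down before the results are known is what keeps the decision tied to readiness rather than to whoever argues loudest.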

What a strong AI audit deliverable pack should look like

Before any build starts, you should have a concise pack that leadership can actually use:

- executive summary with priorities and risks,

- ranked use-case backlog with scoring rationale,

- workflow maps and control points,

- data readiness summary with remediation actions,

- governance checklist and approval model,

- 90-day implementation plan with owners and milestones,

- KPI dashboard specification for launch review.

If your audit output is 60 slides with no decision logic, it is not decision-ready.

Common mistakes businesses make during AI audits

Mistake 1: Treating the audit as a “pre-sales deck”

Audit quality drops when the output is designed to sell complexity instead of reducing it. Your audit should simplify decisions, not obscure them.

Mistake 2: Ignoring operational ownership

Technical recommendations without named owners fail in execution. Ownership must be explicit per workstream.

Mistake 3: Under-scoping governance

Many teams leave policy work until late-stage deployment. That usually creates avoidable blockers right before launch.

Mistake 4: No baseline metrics

Without baseline data, ROI claims become opinions. Capture baselines early.

Mistake 5: Trying to audit everything at once

Good audits are scoped. Start with high-value workflows and expand in phases.

A practical 2-week pre-build audit structure

For many SMEs, a focused two-week structure (ten working days) works well:

- Days 1–2: Objectives, stakeholder interviews, workflow selection.

- Days 3–5: Process mapping, data review, use-case scoring.

- Days 6–8: Risk and governance design, integration planning.

- Days 9–10: Delivery roadmap, KPI framework, go/no-go decisions.

This is enough to make confident build decisions without months of delay.

When moving from audit to implementation, clearly scoped AI development services can then execute in phases with less rework and stronger control.

FAQ

How long should an AI audit take before development begins?

Most SME audits take one to three weeks depending on workflow complexity, data quality, and stakeholder availability.

Do we need technical teams involved in the audit stage?

Yes, but not only technical teams. Commercial owners, operations leads, and compliance stakeholders should all contribute because AI success is cross-functional.

What is the difference between an AI audit and an AI strategy document?

An audit is evidence-based and implementation-oriented. It validates readiness, prioritises use cases, and defines controls. Strategy sets direction; an audit determines execution readiness.

Can we run an AI audit without committing to immediate build?

Yes. In fact, that is often the point. A good audit gives you confidence on what to do now, later, or not at all.

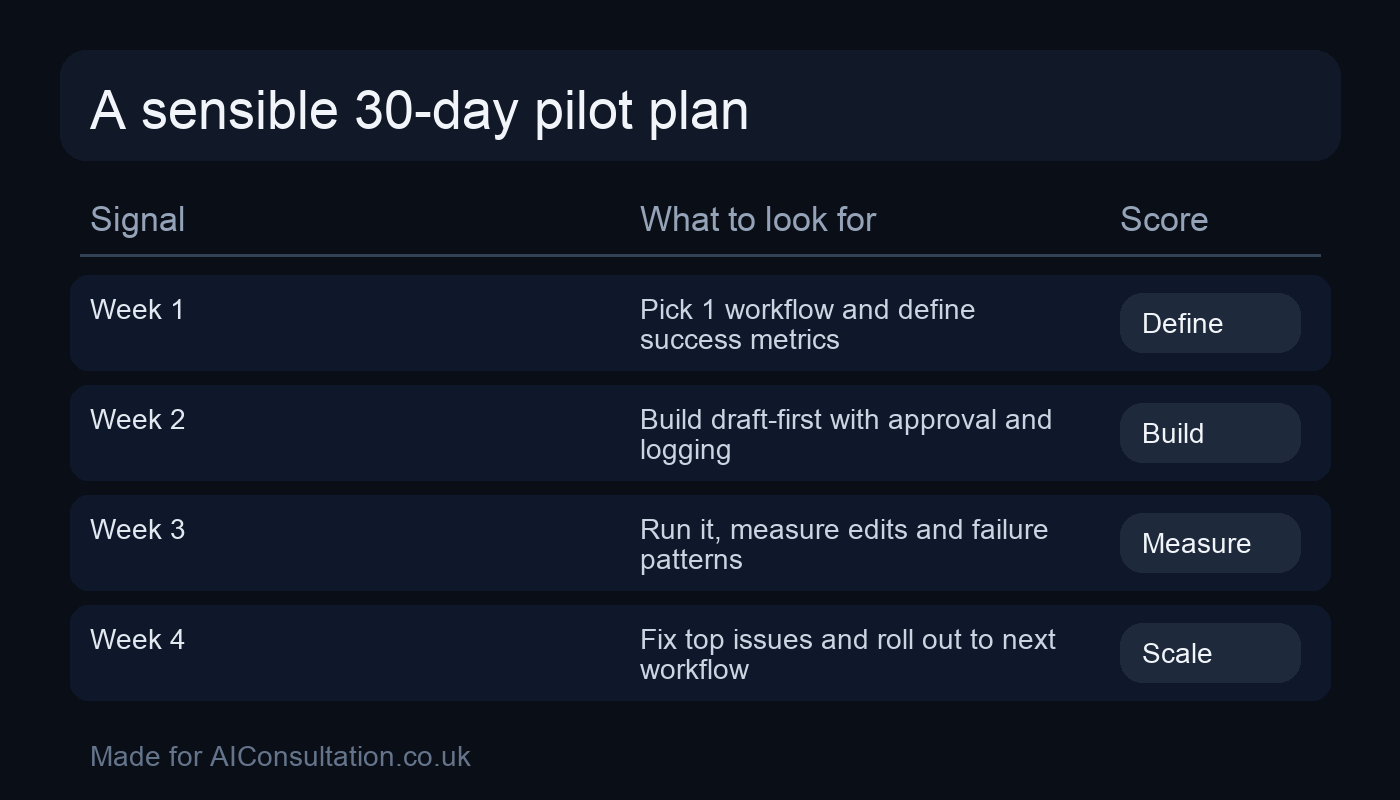

What should we do immediately after the audit?

Pick one or two high-scoring use cases, assign accountable owners, finalise controls, and launch a tightly scoped pilot with measurable KPIs.

Next step

If you want AI projects that deliver outcomes instead of extra admin, start with a pre-build audit that turns assumptions into decisions. We can help you scope the right first phase, define controls, and move into delivery with confidence. Contact us and we will map the next 90 days around practical business impact.