Most AI projects do not fail because the model was weak. They fail because the business was not ready to use it in day-to-day operations.

Teams often buy tools before they have clear ownership, usable data, a practical workflow, or a way to measure impact. Six weeks later, everyone says “AI is interesting”, but nothing has changed commercially.

This guide gives you a practical AI readiness checklist for SMEs so you can move from experimentation to results. It is designed for business owners, directors, and heads of department who want sensible progress without chaos.

If you are at the stage of deciding where AI fits, start with an honest discovery process. A structured AI audit often saves more budget than it costs because it helps you avoid low-value pilots.

What “AI readiness” actually means in practice

Definition: AI readiness is your organisation’s ability to adopt AI safely, usefully, and repeatedly in core workflows with measurable outcomes.

It is not just technical readiness. It includes leadership decisions, process design, data quality, governance, team capability, and change management. If one of those layers is weak, performance drops quickly.

For SMEs, readiness is not about creating an enterprise “AI department” overnight. It is about building enough structure to run focused projects that deliver:

- clear business impact,

- acceptable risk,

- adoption by real people,

- and repeatability beyond a single pilot.

The AI readiness checklist for SMEs

Use this as a working checklist with your leadership team. Score each area Red/Amber/Green. Red means “not in place”, Amber means “partly in place”, Green means “ready to execute”.

1) Business outcomes are defined before tools are chosen

Start with outcomes, not platforms. The most common mistake is buying software because competitors are talking about it.

Check:

- Have you defined one to three priority outcomes for the next 90 days?

- Are these outcomes tied to revenue, margin, retention, or productivity?

- Do you have a baseline metric for each one?

Example outcomes:

- Reduce average proposal turnaround from 5 days to 2 days.

- Increase qualified lead-to-meeting rate by 15%.

- Cut repetitive admin time in operations by 20%.

If outcomes are vague (“be more innovative”), your implementation will drift.

2) You have an accountable owner for each AI initiative

Shared ownership creates silent failure. Every initiative needs one accountable owner with authority to make trade-offs.

Check:

- Is there a named owner for each initiative?

- Does that owner control the timeline and decision-making?

- Is there executive sponsorship for blockers that cross teams?

In SMEs, this owner is often a Head of Ops, Marketing Lead, or Managing Director, depending on the use case. The role is not “technical lead”; it is “commercial owner”.

3) Priority workflows are mapped before automation starts

You cannot improve a process you have not documented. Teams often automate messy workflows and lock in inefficiency.

Check:

- Have you mapped the current workflow end-to-end?

- Do you know where delays, handoffs, and errors happen?

- Have you identified which steps should remain human?

At this stage, keep it simple: one page, clear stages, owners, inputs, outputs, and QA points.

4) Your data is good enough for the intended use case

Perfect data is not required, but “unknown quality” is a risk multiplier. If data is inconsistent, stale, duplicated, or inaccessible, model outputs become unreliable.

Check:

- Do you know where source data lives?

- Are naming conventions and key fields reasonably consistent?

- Can the team access data without manual exports every day?

For many SMEs, the first win is cleaning critical fields in CRM and standardising document templates. That alone improves AI output quality materially.
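As a rough illustration of what "cleaning critical fields" can start with, the sketch below counts missing values and duplicate email addresses in a small list of contact records. The field names (`email`, `company`, `owner`) and the sample records are hypothetical; swap in whichever fields your use case depends on.

```python
from collections import Counter

# Hypothetical CRM export: field names and records are illustrative only.
records = [
    {"email": "a.smith@example.com", "company": "Acme Ltd", "owner": "Priya"},
    {"email": "A.Smith@example.com ", "company": "Acme Ltd", "owner": ""},
    {"email": "", "company": "Birch & Co", "owner": "Tom"},
    {"email": "j.doe@example.com", "company": "", "owner": "Tom"},
]

CRITICAL_FIELDS = ["email", "company", "owner"]


def missing_counts(rows, fields):
    """Count rows where each critical field is empty or whitespace only."""
    return {
        field: sum(1 for row in rows if not (row.get(field) or "").strip())
        for field in fields
    }


def duplicate_emails(rows):
    """Return emails that appear more than once after trimming and lowercasing."""
    normalised = [
        (row.get("email") or "").strip().lower()
        for row in rows
        if (row.get("email") or "").strip()
    ]
    return sorted(email for email, n in Counter(normalised).items() if n > 1)


missing = missing_counts(records, CRITICAL_FIELDS)
duplicates = duplicate_emails(records)
print("Missing values per field:", missing)
print("Duplicate emails:", duplicates)
```

A check like this does not fix anything by itself, but it turns "unknown quality" into a short list of fields someone can own and clean.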

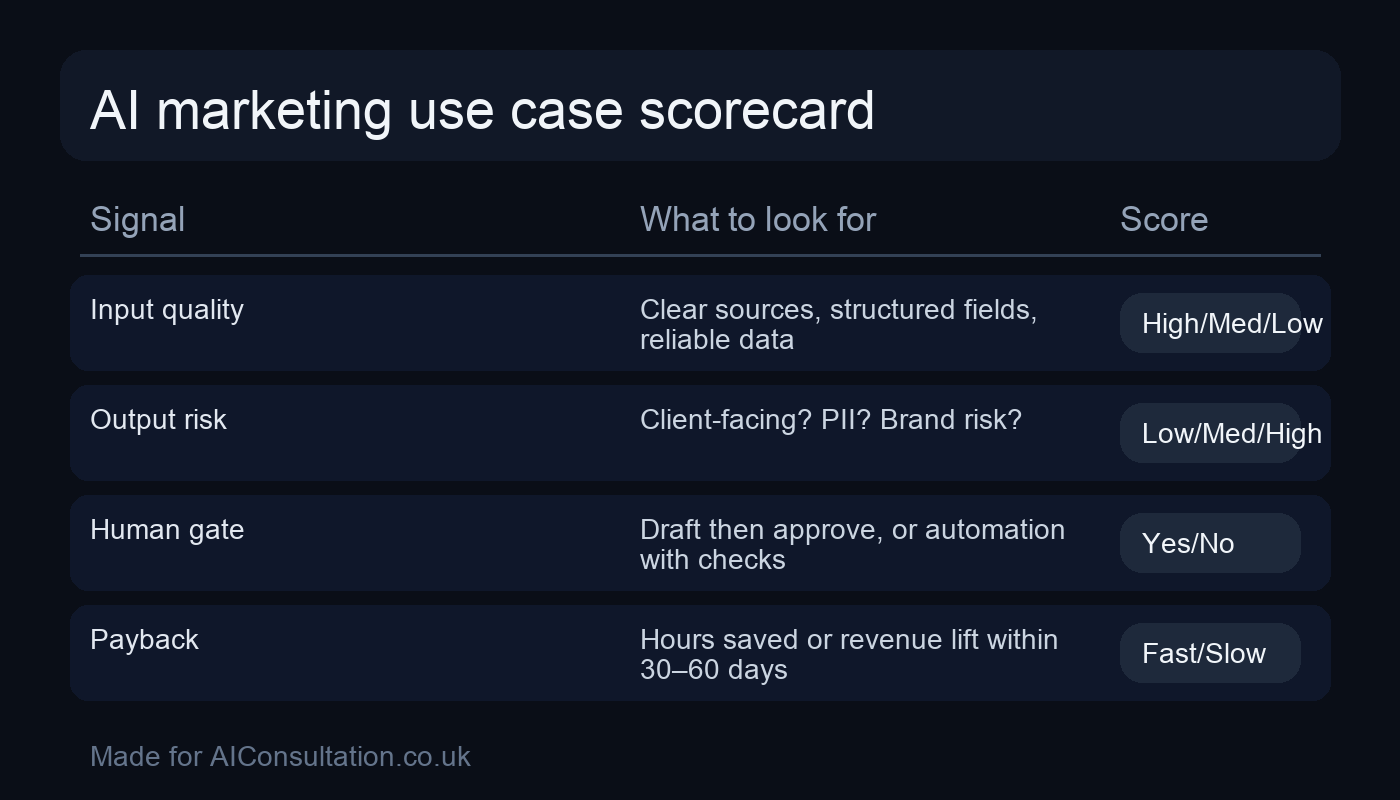

5) Risk boundaries and compliance rules are explicit

Without clear boundaries, teams either overuse AI in risky scenarios or avoid it entirely. You need practical rules that people can follow under time pressure.

Check:

- Have you defined what data is prohibited in public tools?

- Do teams know which tasks require human approval?

- Do you have minimum standards for accuracy and review?

For UK businesses handling personal data, align your policy with established principles from the Information Commissioner’s Office AI guidance. Keep controls practical, not theoretical.

If your team needs implementation support across policy and deployment, a scoped AI compliance plan is usually the fastest route to safe rollout.

6) Prompting and quality standards are documented

Most teams rely on ad-hoc prompting. Results vary wildly by individual, which makes output quality unpredictable.

Check:

- Do you have role-specific prompt frameworks?

- Is there a shared standard for tone, structure, and factual checks?

- Are examples of “good” and “bad” outputs available?

Even a basic internal “prompt playbook” for sales, delivery, and marketing removes rework and improves consistency quickly.

7) Human-in-the-loop review is built into key outputs

AI should reduce effort, not remove accountability. High-impact outputs still need clear human review gates.

Check:

- Which outputs require mandatory review before release?

- Who signs off by workflow stage?

- Are rejection reasons tracked so that prompts and processes improve?

Typical review-critical outputs include contracts, regulated messaging, pricing changes, legal claims, and customer-facing recommendations.

8) Team capability is planned, not assumed

Readiness is a people issue. If only one person understands the toolchain, adoption collapses when that person is unavailable.

Check:

- Have you identified who needs what training?

- Do managers know how to coach AI-assisted workflows?

- Is there a simple route for support questions?

For SMEs, short role-based sessions beat broad “AI awareness” workshops. Train the people who will use the workflow every day.

9) Delivery stack is practical and integrated with your systems

Tool sprawl kills momentum. A smaller, well-integrated stack usually outperforms a long list of disconnected apps.

Check:

- Have you selected tools based on workflow fit, not feature hype?

- Can outputs move into CRM, project management, and reporting tools?

- Are licensing, security, and access controls managed centrally?

Keep the stack boring where possible. Reliability creates confidence, and confidence drives adoption.

10) KPI tracking and review cadence are in place

You need evidence that AI is improving outcomes, not just activity.

Check:

- Do you track before/after performance against baseline?

- Are quality and risk metrics tracked alongside speed metrics?

- Is there a fortnightly review meeting to decide continue, adjust, or stop?

A practical KPI set usually includes productivity, error rate, cycle time, and commercial impact. Keep it small and action-oriented.
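To make the before/after comparison concrete, here is a minimal sketch that computes the percentage change against baseline for each KPI and flags whether it moved in the right direction. The KPI names and figures are hypothetical, and the `lower_is_better` flag is an assumption about how you would label each metric.

```python
def percent_change(baseline, current):
    """Percentage change from baseline to current (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) * 100 / baseline


# Hypothetical figures: one speed metric and one quality metric.
kpis = {
    "proposal_turnaround_days": {"baseline": 5.0, "current": 2.0, "lower_is_better": True},
    "error_rate_pct": {"baseline": 4.0, "current": 5.0, "lower_is_better": True},
}


def review(kpis):
    """Return {name: (change_pct, improved)} for each KPI."""
    results = {}
    for name, k in kpis.items():
        change = percent_change(k["baseline"], k["current"])
        improved = change < 0 if k["lower_is_better"] else change > 0
        results[name] = (change, improved)
    return results


results = review(kpis)
for name, (change, improved) in results.items():
    status = "improved" if improved else "worse"
    print(f"{name}: {change:+.1f}% vs baseline ({status})")
```

In this made-up example, turnaround improves while the error rate gets worse, which is exactly the kind of trade-off that only shows up when quality metrics sit next to speed metrics.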

How to score your readiness in 30 minutes

Run this with your leadership team once per quarter:

- Print the 10 checklist areas above.

- Score each area Red/Amber/Green independently.

- Compare scores and discuss differences.

- Select the top three “Reds” blocking delivery.

- Assign owners, deadlines, and first actions.

This takes about 30 minutes and gives you a realistic execution plan. It also surfaces hidden blockers early, before they burn budget.
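If you collect the scores in a spreadsheet or a short script, the comparison steps can be sketched roughly as follows. The area names are shortened, the scores are invented, and ranking "top Reds" by the number of Red votes is an assumption of this sketch rather than a fixed rule; your team may prefer to rank by commercial impact instead.

```python
from collections import Counter

# Hypothetical independent scores from three leaders ("R", "A", "G")
# for a subset of the checklist areas.
scores = {
    "Outcomes defined": ["G", "G", "A"],
    "Accountable owner": ["R", "R", "R"],
    "Workflows mapped": ["R", "A", "R"],
    "Data quality": ["R", "G", "A"],
    "Risk boundaries": ["A", "A", "A"],
    "KPI tracking": ["R", "R", "A"],
}


def disagreements(scores):
    """Areas where the scorers did not all give the same rating."""
    return [area for area, votes in scores.items() if len(set(votes)) > 1]


def top_reds(scores, limit=3):
    """Areas with at least one Red vote, ranked by number of Red votes.

    Ties keep the original checklist order (sorted() is stable).
    """
    red_counts = {area: Counter(votes)["R"] for area, votes in scores.items()}
    with_reds = [area for area, n in red_counts.items() if n > 0]
    return sorted(with_reds, key=lambda area: -red_counts[area])[:limit]


to_discuss = disagreements(scores)
priorities = top_reds(scores)
print("Discuss differences:", to_discuss)
print("Top Reds to assign owners to:", priorities)
```

The areas where scores differ are often the most useful part of the conversation, because they show where leaders hold different pictures of the same process.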

Common readiness traps that cost SMEs time and money

Trap 1: Treating AI as a marketing experiment only

Marketing is often the first department to adopt AI, and that is fine. But if adoption stays siloed there, the broader operational value is missed.

Trap 2: Chasing “one big platform” to solve everything

There is no single tool that fixes weak process design, unclear ownership, and inconsistent data. Sequence matters more than software branding.

Trap 3: Measuring usage instead of outcomes

“Team members used AI 300 times this week” is not a business metric. Measure conversion quality, margin, retention, and time-to-delivery improvements.

Trap 4: Underestimating change management

People need confidence, examples, and clear boundaries. Without these, adoption stalls after the initial excitement.

What good readiness looks like after 90 days

When SMEs apply this checklist properly, the first 90 days usually show clear signs of progress:

- one or two workflows are running with clear owners,

- quality gates are defined and followed,

- team confidence rises because rules are clear,

- and leadership can see measurable impact, not just tool activity.

From there, scaling becomes a planning question, not a panic reaction.

If you want a practical implementation path, this is where many teams move from exploratory work to scoped AI development services that connect directly to operational systems.

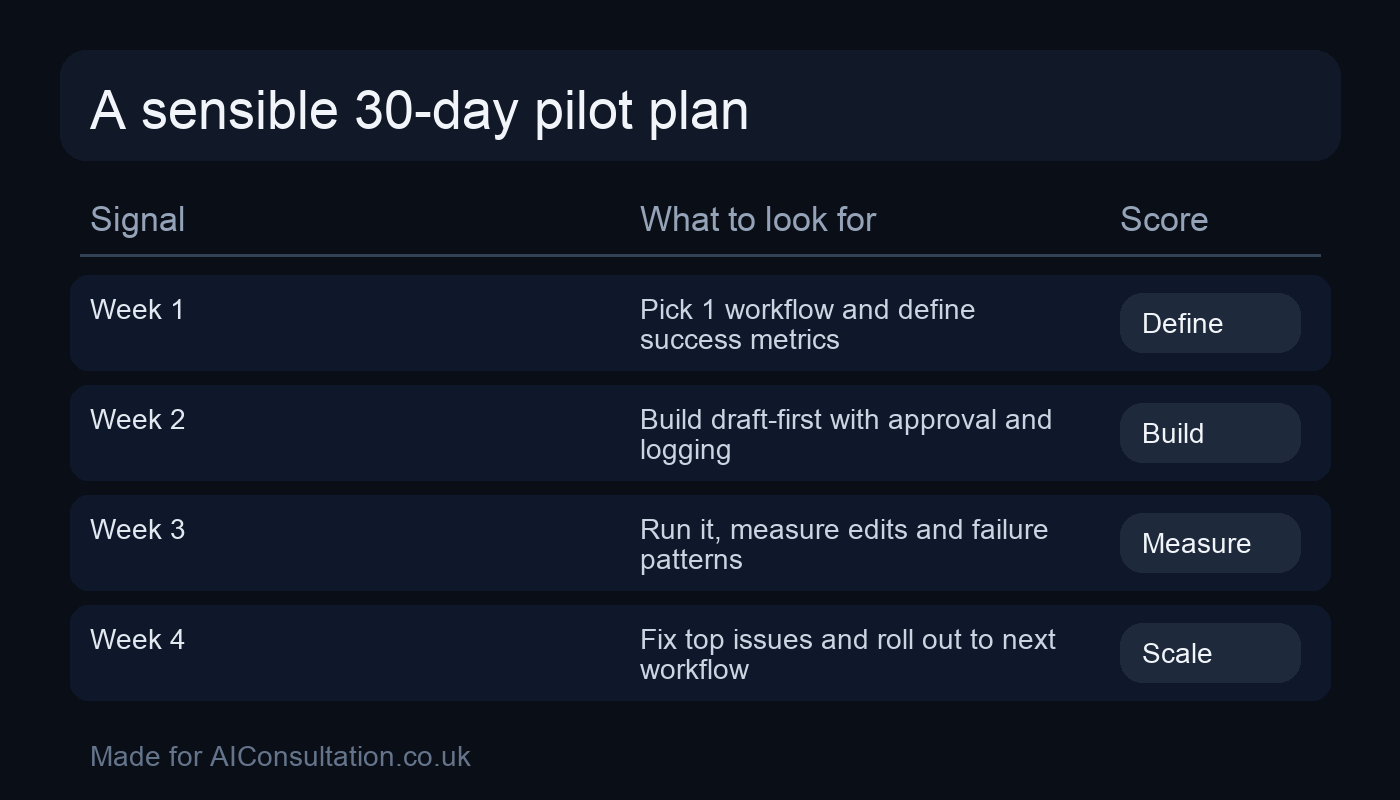

Quick-start action plan for this week

If you want momentum now, do this in order:

- Pick one commercially relevant workflow to improve.

- Map current state and define the target KPI.

- Assign one accountable owner.

- Set risk boundaries and mandatory review points.

- Run a two-week pilot with a fixed review cadence.

This approach is deliberately simple. Simplicity improves execution.

FAQ

How do I know if my SME is ready for AI?

You are ready when you have clear outcomes, named ownership, mapped workflows, baseline metrics, and practical risk controls. You do not need perfect systems; you need enough structure to run safe, measurable pilots.

What is the first AI use case most SMEs should prioritise?

Prioritise a workflow with high repetition, clear steps, and measurable value, such as lead qualification support, proposal drafting, or service delivery admin. Leave complex edge cases until later.

How long does an AI readiness phase usually take?

For most SMEs, a focused readiness phase takes two to six weeks depending on process complexity, data quality, and stakeholder availability.

Do we need to hire AI specialists before starting?

Not always. Many SMEs start with internal owners plus targeted external guidance. The key is role clarity, not immediate headcount expansion.

Can we improve readiness without changing all our current tools?

Yes. In most cases, process mapping, quality standards, and governance improvements deliver early gains before major tool changes are needed.

Next step

If you want to turn AI from “interesting” into operational advantage, begin with a readiness-first plan rather than another random pilot. A focused strategy session can quickly show which workflows are worth prioritising and what controls you need to scale safely. Explore practical options through our AI services, or contact us to scope your next 90 days.