Choosing the right AI marketing use cases isn’t about finding the “sexiest” tool or the boldest automation. It’s about picking a handful of outcomes where (a) the data is available, (b) the workflow has repeatable steps, (c) the risk is acceptable, and (d) you can prove impact quickly. Most UK businesses don’t fail at AI because the models are weak; they fail because they select use cases that are politically popular, technically awkward, or impossible to measure.

This guide is a practical method you can use to decide what to build, buy, or pilot first—without turning your marketing department into a science project. You’ll also see examples that work well in the real world (and the ones that look good on a slide deck but usually disappoint).

Start with outcomes, not tools

“We should use AI” is not a brief. A good brief sounds like: “reduce time-to-publish by 30% without losing quality”, “increase qualified leads from existing traffic”, or “stop wasting spend on low-intent queries”.

Before you list any AI ideas, write down the marketing outcomes you’d happily pay for in three months’ time. In most SMEs, they’ll fall into one of these buckets:

- Efficiency: less manual work, fewer handoffs, faster production.

- Effectiveness: better targeting, stronger creative, higher conversion rates.

- Consistency: brand voice, reporting cadence, QA, governance.

- Risk reduction: compliance checks, spend safeguards, fewer mistakes.

If you want help mapping this to a workable delivery plan (discovery → pilot → rollout), it’s usually worth starting with an AI audit rather than jumping straight to tooling.

What makes a marketing use case “AI-ready”?

The most successful AI marketing use cases share a few traits. You can treat these as a checklist:

- Clear inputs: you can define what the system needs (briefs, product data, historical performance, brand rules).

- Clear outputs: you know what “good” looks like (a report, a draft, a list of keywords, a set of ads, a prioritised backlog).

- Feedback loop: there’s a way to score or review outputs so the system improves (human review, performance data, QA rules).

- Repeatability: the workflow happens weekly or daily, not once a year.

- Measurability: you can attach KPIs (time saved, CTR, CPA, CVR, lead quality, pipeline contribution).

When these traits are missing, businesses end up with AI that produces lots of content but very little value—or worse, value you can’t prove.

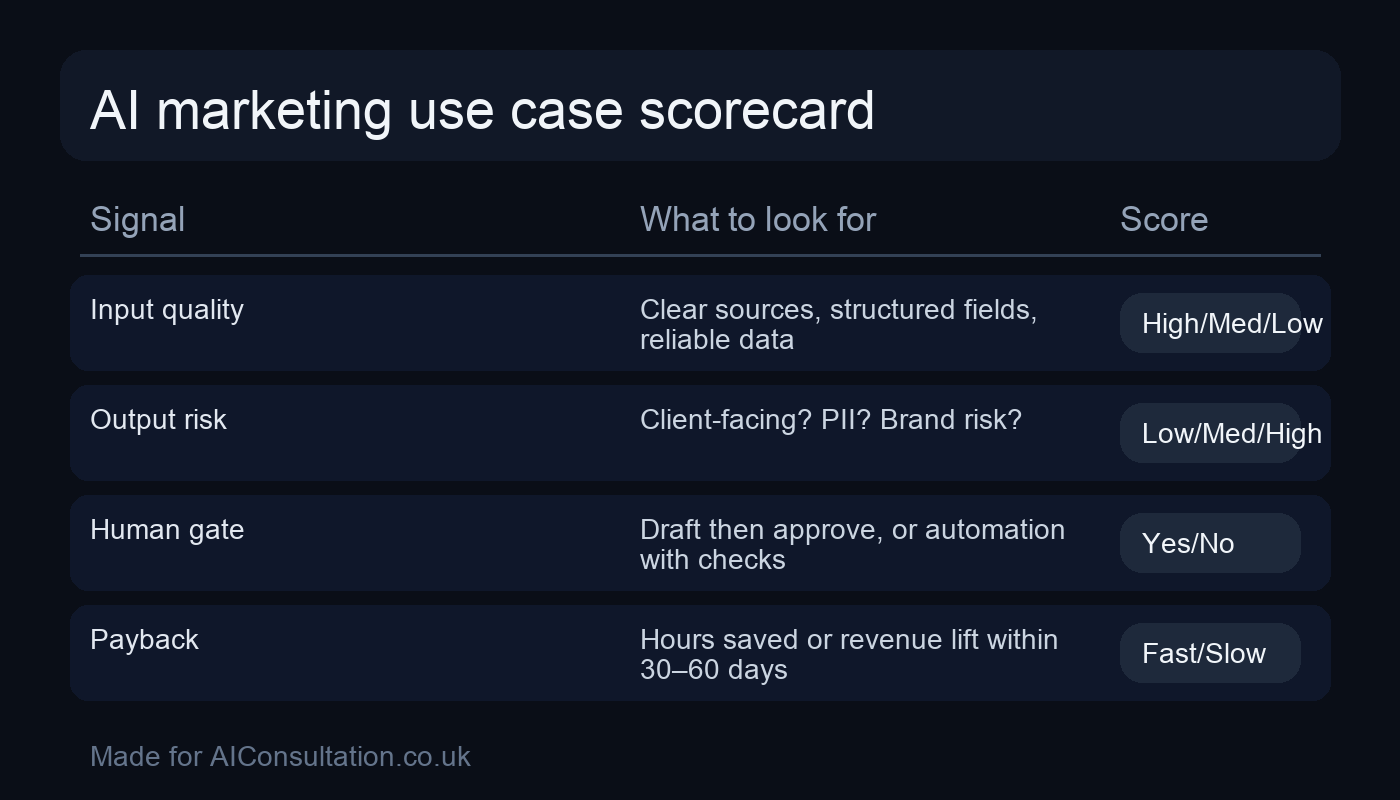

The use-case selection method (a simple scorecard)

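Here’s a method I use with clients because it forces the trade-offs into the open. List 10–20 candidate use cases, then score each one 1–5 across the following dimensions, keeping the scale pointed the same way throughout (5 is the favourable end, so a low-risk, low-effort use case scores high on the last two):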

- Business impact: if it works, how much does it move revenue, margin, or capacity?

- Time-to-value: can you see an outcome in 2–6 weeks?

- Data readiness: do you have the inputs in a usable form?

- Workflow fit: does it sit inside an existing process people already follow?

- Governance risk: what’s the downside if it’s wrong?

- Operational effort: who will maintain it and how often?

Don’t overthink the numbers; the point is the discussion.

Once you’ve scored everything, pick two use cases for a pilot: one “safe efficiency win” and one “growth experiment”. That balance keeps the programme credible.
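
If it helps to see the scorecard as something you can run, here is a minimal Python sketch. It is an illustration rather than a prescribed tool: the candidate names and scores are invented, equal weighting is an assumption, the "efficiency" and "growth" labels are hypothetical, and it assumes 5 is the favourable end on every dimension.

```python
from dataclasses import dataclass

# Dimension names follow the scorecard; equal weights are an assumption.
DIMENSIONS = (
    "business_impact",
    "time_to_value",
    "data_readiness",
    "workflow_fit",
    "governance_risk",     # assumed: 5 = low downside if wrong
    "operational_effort",  # assumed: 5 = little ongoing upkeep
)


@dataclass
class UseCase:
    name: str
    kind: str     # "efficiency" or "growth" (hypothetical labels)
    scores: dict  # dimension -> score from 1 to 5

    def total(self) -> int:
        for dim in DIMENSIONS:
            if not 1 <= self.scores[dim] <= 5:
                raise ValueError(f"{self.name}: {dim} must be 1-5")
        return sum(self.scores[dim] for dim in DIMENSIONS)


def make(name, kind, *values):
    """Build a UseCase from scores listed in DIMENSIONS order."""
    return UseCase(name, kind, dict(zip(DIMENSIONS, values)))


def pick_pilots(candidates):
    """Return the top-scoring use case of each kind."""
    picks = {}
    for kind in ("efficiency", "growth"):
        pool = [c for c in candidates if c.kind == kind]
        if pool:
            picks[kind] = max(pool, key=lambda c: c.total())
    return picks


# Invented candidates and scores, for illustration only.
candidates = [
    make("Weekly performance narrative", "efficiency", 3, 5, 4, 5, 5, 4),
    make("Content QA checks", "efficiency", 3, 4, 4, 4, 4, 4),
    make("Ad copy variation testing", "growth", 4, 4, 3, 4, 3, 3),
    make("On-site personalisation", "growth", 4, 2, 2, 2, 3, 2),
]

pilots = pick_pilots(candidates)
for kind, use_case in pilots.items():
    print(f"{kind}: {use_case.name} ({use_case.total()}/30)")
```

The totals are only a conversation starter. If two candidates land within a point or two of each other, that is a cue to argue about the individual scores rather than trust the sum.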

Use cases that usually work well (with examples)

1) Performance reporting that actually answers questions

Most reporting fails because it’s a dashboard dump. AI can help by turning raw metrics into a narrative: what changed, why it likely changed, and what you should do next. This is one of the easiest “first wins” because it’s low risk when you keep humans in the loop.

Good fit when: you already have consistent campaign naming and a weekly reporting rhythm.

What to automate:

- Pull spend, conversions, CPA/ROAS, impression share, and key search terms.

- Detect anomalies (e.g., brand CPA up 20% WoW) and generate hypotheses.

- Produce a “top actions” list for the next 48 hours.

KPIs: time saved per week, fewer missed issues, faster iteration cycles.
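
The anomaly step can start as plain arithmetic before any model gets involved. The sketch below flags campaigns whose CPA rose by more than a threshold week on week. The 20% threshold echoes the example above; the campaign names, figures, and function names are all invented for illustration.

```python
def cpa(spend, conversions):
    """Cost per acquisition; None when there are no conversions."""
    return spend / conversions if conversions else None


def flag_cpa_anomalies(last_week, this_week, threshold=0.20):
    """Return (campaign, % change) pairs where CPA rose by more than `threshold`."""
    flagged = []
    for campaign, (spend, conversions) in this_week.items():
        if campaign not in last_week:
            continue  # new campaign, no baseline to compare against
        previous = cpa(*last_week[campaign])
        current = cpa(spend, conversions)
        if previous is None or current is None:
            continue  # CPA undefined; leave these to a human reviewer
        change = (current - previous) / previous
        if change > threshold:
            flagged.append((campaign, round(change * 100, 1)))
    return sorted(flagged, key=lambda item: item[1], reverse=True)


# Invented figures: campaign -> (spend in GBP, conversions)
last_week = {"brand": (500.0, 50), "generic": (1200.0, 30), "competitor": (300.0, 5)}
this_week = {"brand": (520.0, 40), "generic": (1150.0, 29), "competitor": (310.0, 0)}

anomalies = flag_cpa_anomalies(last_week, this_week)
for campaign, pct in anomalies:
    print(f"{campaign}: CPA up {pct}% week on week")
```

The flagged list is the input to the narrative step: each anomaly becomes a prompt for hypotheses, which a human then accepts or rejects.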

2) Lead qualification and routing (without annoying people)

If your marketing generates enquiries that vary wildly in quality, AI can help triage and route leads. The goal is not to “auto-reject” humans; it’s to speed up response times and give your sales team better context.

Good fit when: you have a predictable set of questions (service, budget range, timeline, industry) and a CRM or even a structured inbox workflow.

What to automate:

- Extract key details from forms/emails.

- Assign an intent tier and suggested next step.

- Draft a first response that a human approves.
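
To make the tiering step concrete, here is a rule-based sketch that assumes the details have already been extracted into structured fields. The field names, thresholds, tier labels, and next steps are all hypothetical; in practice you would agree them with your sales team. Note that no tier rejects anyone: every enquiry still gets a human-approved reply.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Enquiry:
    service: Optional[str]
    budget_gbp: Optional[int]
    timeline_weeks: Optional[int]
    industry: Optional[str]


FIELDS = ("service", "budget_gbp", "timeline_weeks", "industry")


def triage(enquiry, min_budget_gbp=2000, max_timeline_weeks=12):
    """Assign an intent tier and a suggested next step (thresholds are assumptions)."""
    signals = [
        bool(enquiry.service),
        enquiry.budget_gbp is not None and enquiry.budget_gbp >= min_budget_gbp,
        enquiry.timeline_weeks is not None
        and enquiry.timeline_weeks <= max_timeline_weeks,
    ]
    missing = [name for name in FIELDS if getattr(enquiry, name) is None]
    score = sum(signals)
    if score == 3:
        tier, step = "A", "Call back within one working day"
    elif score == 2:
        tier, step = "B", "Send a tailored reply and ask for the missing details"
    else:
        tier, step = "C", "Send the standard reply for human approval"
    return {"tier": tier, "next_step": step, "missing": missing}


strong = triage(Enquiry("AI audit", 5000, 6, "retail"))
partial = triage(Enquiry("AI audit", None, 4, None))
print(strong)
print(partial)
```

The `missing` list is what gives sales better context: it tells whoever approves the draft reply which questions still need asking.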

3) Content operations: briefs, outlines, and QA—not “publish button” automation

AI is excellent at structured content work if you give it constraints: audience, intent, angle, and on-page requirements. It’s less reliable as an autonomous publisher. The sweet spot is using AI to increase throughput while preserving human judgement and brand standards.

Good fit when: you publish regularly and you can define what “on brand” means (tone, claims policy, reading level, examples).

What to automate:

- Turn keyword clusters into content briefs (intent, H2s, FAQs, internal links).

- Generate outlines and first drafts for human editors.

- Run QA checks: broken links, missing sections, compliance wording, thin pages.
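
The QA step is the easiest part to pin down in code, because most checks are deterministic. This sketch checks a draft for missing sections, banned claims, thin content, and empty link targets. The required sections, banned phrases, and word-count threshold are hypothetical house rules, and it only inspects link syntax; checking that a URL actually resolves would need a network request.

```python
import re

# Hypothetical house rules; replace with your own standards.
REQUIRED_SECTIONS = ("Introduction", "FAQs")
BANNED_PHRASES = ("guaranteed results", "risk-free")
MIN_WORDS = 300


def qa_check(draft, required=REQUIRED_SECTIONS, banned=BANNED_PHRASES,
             min_words=MIN_WORDS):
    """Return a list of human-readable issues found in a draft."""
    issues = []

    # Treat any line that exactly matches a section name as its heading.
    lines = {line.strip().lower() for line in draft.splitlines()}
    for section in required:
        if section.lower() not in lines:
            issues.append(f"missing section: {section}")

    lowered = draft.lower()
    for phrase in banned:
        if phrase in lowered:
            issues.append(f"banned phrase: {phrase}")

    word_count = len(draft.split())
    if word_count < min_words:
        issues.append(f"thin page: {word_count} words (minimum {min_words})")

    # Markdown-style links with nothing between the parentheses.
    for text, target in re.findall(r"\[([^\]]*)\]\(([^)]*)\)", draft):
        if not target.strip():
            issues.append(f"empty link target: {text}")

    return issues


draft = """Introduction
Our audit service offers guaranteed results for every client.
See the [pricing page]() for details.
"""

issues = qa_check(draft)
for issue in issues:
    print(issue)
```

Checks like these run before an editor sees the draft, so review time goes on judgement rather than on spotting missing FAQs.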

If you’re a smaller team, this can be packaged as “one consistent weekly publishing cadence” rather than an open-ended content machine. For small organisations, see AI for small businesses for a sensible scope that won’t overwhelm you.


4) Paid search query triage and negative keyword discovery

This is an underrated use case because it’s easy to measure. AI can cluster search terms, spot irrelevant themes, and propose negatives—while your PPC lead makes the final call.

Good fit when: you have enough search term volume and a clear definition of “good lead” vs “time-waster”.

What to automate:

- Weekly clustering of new search terms into themes.

- Flagging “likely irrelevant” themes for review.

- Drafting negative keyword lists and exclusions.

KPIs: reduced wasted spend, improved conversion rate, fewer irrelevant enquiries.
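
Here is a deliberately simple stand-in for the triage loop. Instead of model-based clustering, it groups search terms by a short list of "likely irrelevant" signal words, then proposes themes that have spent money without converting. The signal words, spend threshold, and search term data are all invented; the output is a review list for your PPC lead, not a set of changes to apply automatically.

```python
from collections import defaultdict

# Hypothetical signal words for a B2B service business.
IRRELEVANT_SIGNALS = ("free", "jobs", "salary", "course", "template")


def theme_for(term):
    """Assign a term to the first signal word it contains, else 'other'."""
    tokens = term.lower().split()
    for signal in IRRELEVANT_SIGNALS:
        if signal in tokens:
            return signal
    return "other"


def propose_negatives(rows, min_spend=20.0):
    """Return (theme, spend, terms) for non-converting themes worth reviewing."""
    themes = defaultdict(lambda: {"terms": [], "spend": 0.0, "conversions": 0})
    for term, spend, conversions in rows:
        bucket = themes[theme_for(term)]
        bucket["terms"].append(term)
        bucket["spend"] += spend
        bucket["conversions"] += conversions

    proposals = [
        (theme, data["spend"], data["terms"])
        for theme, data in themes.items()
        if theme != "other"
        and data["conversions"] == 0
        and data["spend"] >= min_spend
    ]
    return sorted(proposals, key=lambda p: p[1], reverse=True)


# Invented rows: (search term, spend in GBP, conversions)
rows = [
    ("ai marketing agency london", 85.0, 4),
    ("free ai marketing tools", 32.5, 0),
    ("ai marketing jobs", 18.0, 0),
    ("marketing jobs manchester", 14.0, 0),
    ("ai marketing course", 9.0, 0),
    ("free marketing plan template", 6.5, 0),
]

proposals = propose_negatives(rows)
for theme, spend, terms in proposals:
    print(f"review '{theme}': £{spend:.2f} spent, 0 conversions, {len(terms)} terms")
```

Grouping by theme matters because individual terms rarely spend enough to notice; it is the theme total that shows where the budget is leaking.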

5) Creative iteration for Meta and Google (with guardrails)

AI can generate variations of copy and creative angles faster than humans. Where teams go wrong is letting it freewheel on claims, brand tone, or compliance. With the right guardrails, it becomes a reliable ideation engine and testing accelerator.

Good fit when: you have a decent library of past winners/losers and a clear compliance policy.

What to automate:

- Generate copy variations anchored to a single proposition.

- Tag each variation with audience, angle, and intent stage.

- Suggest test matrices (what to hold constant vs vary).
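
A test matrix is just the combinations of whatever you choose to vary, with everything else pinned. The sketch below builds one with `itertools.product` from the standard library; the proposition, audience, angles, and intent stages are invented examples.

```python
from itertools import product


def build_test_matrix(constants, variables):
    """Return one dict per test cell: the constants plus one combination of variables."""
    keys = list(variables)
    cells = []
    for combo in product(*(variables[key] for key in keys)):
        cell = dict(constants)
        cell.update(zip(keys, combo))
        cells.append(cell)
    return cells


# Held constant across every cell (invented values).
constants = {"proposition": "Fixed-fee AI audit", "audience": "UK SME owners"}

# Varied between cells (invented values).
variables = {
    "angle": ["save time", "reduce wasted spend", "prove ROI"],
    "intent_stage": ["awareness", "consideration"],
}

matrix = build_test_matrix(constants, variables)
for cell in matrix:
    print(cell["angle"], "|", cell["intent_stage"])
print(f"{len(matrix)} cells")
```

The cell count grows multiplicatively, which is a useful brake: if the matrix is bigger than your budget can test properly, vary fewer things.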

Use cases that often disappoint (and why)

“Fully automated” blog publishing

Publishing without editorial review is where brands get burned: factual errors, odd tone, duplicated ideas, or content that looks fine but doesn’t rank. AI should speed up drafting and QA; the final publish step should remain governed.

AI “personalisation” without enough signal

Personalisation only works when you have reliable behavioural or account-level signals. If the only input is “visited the site once”, you’ll likely end up with generic messaging that doesn’t outperform a well-written default journey.

Attribution fixes that ignore measurement reality

AI can help interpret messy attribution, but it can’t replace the need for good tracking hygiene. If your UTMs and conversion actions are inconsistent, the model’s output will be confidently wrong. Fix the basics first.
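
"Fix the basics" can begin with a tracking hygiene check like this one, which audits landing page URLs for missing or inconsistently cased UTM parameters using Python's standard `urllib.parse`. The required parameters and the lowercase convention are assumptions about your naming policy, and the URLs are placeholders.

```python
from urllib.parse import parse_qs, urlparse

# Assumed naming policy: these three are mandatory and always lowercase.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def utm_issues(url):
    """Return a list of UTM problems for one URL (empty list means clean)."""
    params = parse_qs(urlparse(url).query)  # blank values are dropped by default
    issues = []
    for key in REQUIRED_UTMS:
        values = params.get(key)
        if not values:
            issues.append(f"missing {key}")
        elif values[0] != values[0].lower():
            issues.append(f"{key} not lowercase: {values[0]}")
    return issues


urls = [
    "https://example.com/?utm_source=google&utm_medium=cpc&utm_campaign=brand",
    "https://example.com/?utm_source=Google&utm_medium=cpc",
    "https://example.com/landing",
]

report = {url: utm_issues(url) for url in urls}
for url, problems in report.items():
    print(url, "->", problems or "ok")
```

Run something like this across your live ads and email links before asking any model to interpret attribution; it shows how much of the mess is fixable at source.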

Risk and governance: pick a safe first boundary

The fastest way to lose trust in an AI programme is to ship something that damages reputation or compliance posture. Treat governance as a design constraint, not an afterthought.

In practice, that means you define:

- What the system is allowed to do (draft vs publish, suggest vs change spend).

- What must be reviewed by a human (claims, pricing, medical/financial statements, exclusions).

- What data it can access (customer PII, CRM fields, ad account data).

- How outputs are logged (so you can audit decisions).
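
Those four definitions translate quite directly into a policy check that sits in front of the system. The sketch below is one possible shape, not a standard: the action names, review topics, and data source labels are all hypothetical, and the audit log is an in-memory list where a real deployment would want durable storage.

```python
from datetime import datetime, timezone

# Hypothetical policy; every name here is illustrative.
POLICY = {
    "allowed_actions": {"draft_copy", "suggest_negative_keywords"},
    "review_topics": {"claims", "pricing", "medical", "financial", "exclusions"},
    "allowed_data": {"ad_account_data"},
}

audit_log = []


def authorise(action, topics, data_sources, policy=POLICY):
    """Return 'allowed', 'needs_review' or 'blocked', and log the decision."""
    if action not in policy["allowed_actions"]:
        decision = "blocked"
    elif not set(data_sources) <= policy["allowed_data"]:
        decision = "blocked"
    elif set(topics) & policy["review_topics"]:
        decision = "needs_review"
    else:
        decision = "allowed"

    # Every request is logged, including blocked ones, so decisions can be audited.
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "topics": sorted(topics),
        "data_sources": sorted(data_sources),
        "decision": decision,
    })
    return decision


decisions = [
    authorise("draft_copy", ["tone"], ["ad_account_data"]),
    authorise("draft_copy", ["pricing"], ["ad_account_data"]),
    authorise("change_budget", [], ["ad_account_data"]),
    authorise("draft_copy", [], ["customer_pii"]),
]
print(decisions)
```

Writing the boundary down as data rather than burying it in prompts means you can change the policy without touching the workflow, and show an auditor exactly what was permitted when.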

For UK organisations, it’s also wise to sanity-check your approach against established guidance on responsible AI and data protection. This is one of the better, plain-English resources: ICO guidance on AI and data protection.

If you need a structured way to operationalise this (policies, DPIA-style thinking, vendor checks), start with AI compliance work before you scale.

Build vs buy vs “bolt-on”: choosing the implementation path

Once you’ve selected the use case, the next decision is how you’ll deliver it. Most marketing teams have three realistic options:

- Buy: a specialised tool (best when the use case is common and the vendor is mature).

- Bolt-on: add AI features inside existing platforms (best for quick wins).

- Build: a tailored workflow across your stack (best when you need control, differentiation, or unusual integrations).

Build is often the right choice when you’re trying to connect multiple systems—ads data, analytics, CRM, and content workflows—into one coherent loop. If that’s your situation, AI development services can be a practical route, as long as you keep the initial scope tight.

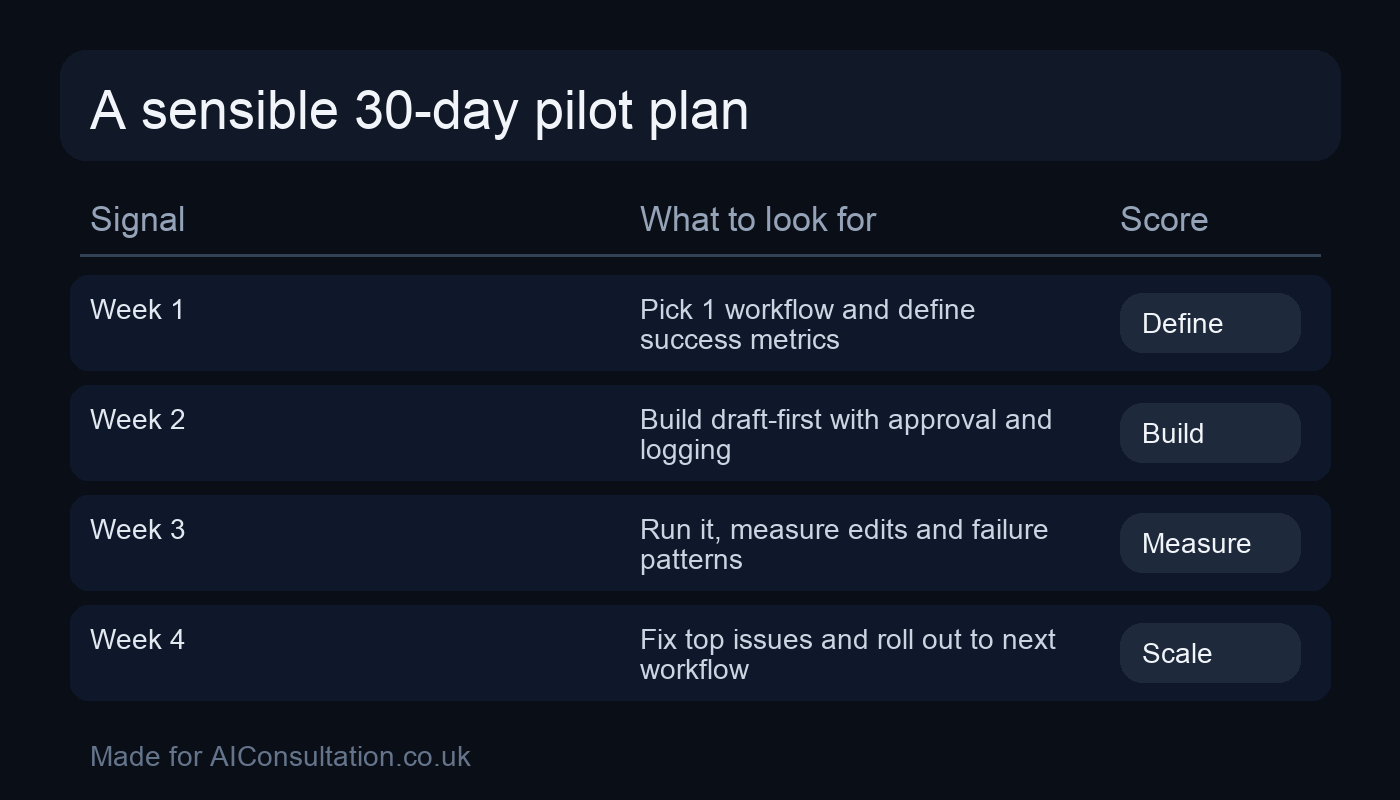

A 30-day pilot plan you can actually run

A pilot should create evidence, not theatre. Here’s a simple 30-day plan that keeps everyone sane:

Days 1–5: define the “thin slice”

- Pick one workflow and one primary KPI.

- Define inputs, outputs, and review responsibilities.

- Decide your boundaries: is it draft-only, or can it execute?

Days 6–15: implement the minimum viable version

- Connect the data sources you already trust.

- Build a simple prompt/logic layer with versioning.

- Add QA checks (format, banned claims, link validation).

Days 16–25: test against reality

- Run outputs in parallel with the existing process.

- Collect review notes (what the system gets wrong, repeatedly).

- Measure time saved and performance deltas where possible.

Days 26–30: decide whether to scale

- If the KPI improved (or capacity increased) and risk is controlled, scale.

- If not, stop and pick the next use case—don’t force it.
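
The scale-or-stop rule is simple enough to write down before the pilot starts, which stops the goalposts moving on day 30. This sketch assumes one primary KPI where lower is better (CPA here) and treats "risk is controlled" as zero logged incidents; both are assumptions, and all the figures are invented.

```python
def pct_change(before, after):
    """Percentage change from the baseline value."""
    return (after - before) / before * 100


def pilot_decision(baseline, pilot, kpi_lower_is_better=True):
    """Apply the rule: scale if the KPI or capacity improved and risk is controlled."""
    hours_saved = baseline["hours_per_week"] - pilot["hours_per_week"]
    kpi_change = pct_change(baseline["kpi"], pilot["kpi"])
    kpi_improved = kpi_change < 0 if kpi_lower_is_better else kpi_change > 0
    risk_controlled = pilot["incidents"] == 0  # assumed definition of "controlled"
    scale = (kpi_improved or hours_saved > 0) and risk_controlled
    return {
        "hours_saved": hours_saved,
        "kpi_change_pct": round(kpi_change, 1),
        "decision": "scale" if scale else "stop",
    }


# Invented figures; kpi is CPA in GBP.
baseline = {"hours_per_week": 6.0, "kpi": 42.0}
pilot = {"hours_per_week": 2.5, "kpi": 39.9, "incidents": 0}

result = pilot_decision(baseline, pilot)
print(result)
```

A single incident flips the decision to stop regardless of the gains, which mirrors the rule above: improvement only counts when risk is controlled.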

Most teams are surprised how far they get with a disciplined pilot and clear rules. If you’d like a second pair of eyes on your shortlist, it’s usually quickest to share your top 5 candidates and your rough scores—then we can stress-test the assumptions and pick the best two to run first. If you want to talk it through, get in touch.

Common mistakes when choosing use cases (and how to avoid them)

Even with a scorecard, teams regularly trip over the same patterns. If you recognise any of these, it’s not a disaster—just adjust your shortlist before you commit build time.

- Picking a “visibility” project first: chatbots and flashy personalisation demos get attention, but they often sit outside the core workflow. Start with something that improves an existing weekly process (reporting, query triage, briefs, QA).

- Underestimating data cleaning: marketing data is messy (naming conventions, duplicated sources, half-missing UTMs). If a use case depends on clean, joined-up data, score the data-readiness brutally. A smaller use case that uses one reliable source often wins.

- Confusing content volume with value: AI can create more pages, ads and emails than your organisation can review. Put explicit limits in the pilot (e.g., “maximum 5 new ad concepts per week”) and focus on quality signals.

- No clear owner for the output: if the system drafts recommendations, who has the authority to accept or reject them? Pilots fail when outputs float around without accountability.

- Skipping a rollback plan: for anything that touches spend or messaging, define how you revert quickly. That safety net makes teams more willing to test—and keeps risk acceptable.

Final checks (so this doesn’t turn into a mess later)

- Measurement: do you have a before/after baseline?

- Ownership: who owns outputs, and who owns maintenance?

- Governance: what can it never do automatically?

- Scope control: what’s explicitly out of scope for this pilot?

If you keep those four things tight, AI marketing stops being a vague ambition and becomes a reliable, compounding advantage.